From tactile touch to touchscreen

Teaching programming to children with special educational needs and disabilities (SEND) may at first seem quite daunting. Programming a computer can seem a rather abstract task: text on a screen, interspersed with various bits of punctuation, all recognisable but somehow a bit jumbled up. That text may translate into an action, such as an animation in front of you or the response of a device like a robotic arm or a drone. Or it may create something on a 3D printer. How the writing, whether lines of code or blocks of commands dragged together, makes this happen can be a challenge to carry out, and even to understand. This is especially true for some learners with SEND. Yet there is something inherently satisfying for them in achieving it, and for their teachers in getting them there.

Teaching Programming to Children with SEND – How to Get Started

Understanding Directions

I often start some distance away from the computers, perhaps in the playground or in the school hall, using two-by-two square grids marked out with chalk or masking tape, or the painted grids many outdoor spaces now have. I get the learners used to the idea of giving directions in precise language. In pairs, taking turns, they either give or respond to the commands to follow a route around the squares. Often it is simply ‘two (steps) forward, turn right, two (steps) forward’ to get to the opposite corner. More elaborate routes are possible, though: by blocking paths, specifying a set number of moves, or even having learners face backwards, a degree of complexity can be introduced.

Depending on their age and ability, many children will find it hard to give, or respond to, these instructions other than one at a time. They may find it extremely difficult to formulate an entire route, or to retain it in their heads long enough to follow it. They can be helped by writing the route on a dry wipe board and walking through it, or by physically laying it out with a series of cardboard arrows.
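For readers who want to see the same idea expressed as code, here is a minimal Python sketch that simulates the playground route described above. It assumes the grid's corners are points two steps apart, that the walker starts at one corner facing ‘north’, and that ‘turn right’ is a quarter turn on the spot without moving. The command names and the `follow_route` function are hypothetical, chosen only for illustration.

```python
# A minimal sketch of following spoken directions on a grid.
# Assumption: positions are (x, y) counted in steps, and the walker
# starts at (0, 0) facing north. Command names are hypothetical.

# Headings in clockwise order, so a right turn moves one place along.
HEADINGS = ["north", "east", "south", "west"]
MOVES = {"north": (0, 1), "east": (1, 0), "south": (0, -1), "west": (-1, 0)}


def follow_route(commands, start=(0, 0), heading="north"):
    """Apply each command in turn and return the final position and heading."""
    x, y = start
    h = HEADINGS.index(heading)
    for command in commands:
        if command == "forward":
            dx, dy = MOVES[HEADINGS[h]]
            x, y = x + dx, y + dy
        elif command == "backward":
            dx, dy = MOVES[HEADINGS[h]]
            x, y = x - dx, y - dy
        elif command == "right":
            h = (h + 1) % 4  # quarter turn clockwise, staying on the spot
        elif command == "left":
            h = (h - 1) % 4  # quarter turn anticlockwise, staying on the spot
        else:
            raise ValueError(f"Unknown command: {command}")
    return (x, y), HEADINGS[h]


# 'Two forward, turn right, two forward' on a grid two steps per side.
route = ["forward", "forward", "right", "forward", "forward"]
print(follow_route(route))  # ((2, 2), 'east')
```

Passing the whole list at once mirrors a learner formulating an entire route in advance; passing a one-item list mirrors the one-instruction-at-a-time approach many learners start with.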

Understanding Sequences

The purpose of this activity is to help learners understand the idea of a sequence of instructions that gets them from one point to another. This understanding is essential for using programmable machines such as the Blue-Bot effectively, and it is the next step in developing an understanding of programming.

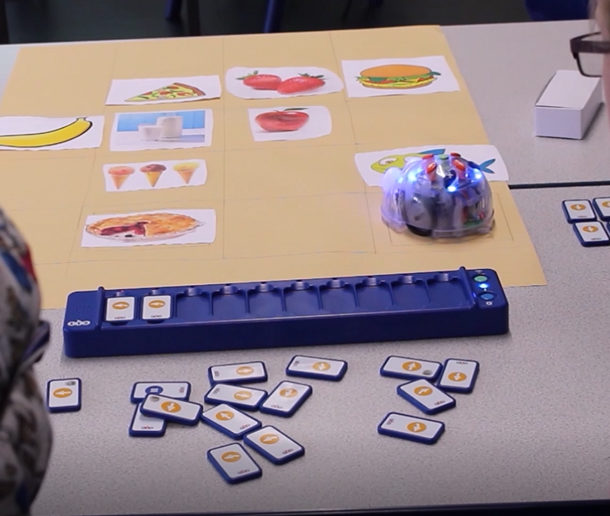

Here, I again lay out a two-by-two grid, usually with masking tape on a table, only this time at Blue-Bot scale, with two forward moves per side. As before, we start by tapping one step at a time into the device, building up the program as we go. Then we work out the instructions in advance, using dry wipe boards or printouts of the buttons on individual pieces of card. Pre-printed floor mats, or big sheets of paper precisely measured out in one-step squares, can increase the complexity or act as the next stage in the process.

These sorts of activities are great for group work and for developing language skills, as pupils work out routes, solve problems, build their approaches, correct their mistakes, and predict what will happen, then adjust and amend. As they watch the Blue-Bot follow the path, they can see the course they plotted played out in front of them. Then they share in the celebratory ‘Beep, beep’ when it finishes.

Using the Tactile Reader

For some learners, programming directly into the Blue-Bot can be a challenge, perhaps because they struggle to remember what they have pressed. There is no immediate, visible reinforcement of what they have done until they press ‘Go’. This is where the Tactile Reader comes in.

This is a bar with ten spaces for tiles. Each tile represents a Blue-Bot command, equivalent to one press of a button. When working out a program, learners can lay out the tiles along the route, then load them onto the bar. The tiles can be oriented in two ways, depending on whether you want them to be read horizontally or vertically; the vertical layout is closer to how many programs appear on screen. The commands are sent to the Blue-Bot over a Bluetooth connection. A red light comes on as each command is executed, so it is easy to follow the steps. Debugging is simply a matter of replacing or reordering the tiles.
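The way the Tactile Reader structures a program can also be sketched as a small data structure: an ordered bar with a fixed number of slots, read in order, where debugging means swapping or reordering tiles. The Python below is a hypothetical model, not the Tactile Reader's actual firmware or any published Blue-Bot API; the `TileBar` class, its methods, the tile names and the idea of reporting which slot is ‘lit’ are all assumptions for illustration. Only the ten-slot limit comes from the description above.

```python
# A hypothetical model of a ten-slot tile bar; not a real Blue-Bot API.
# Assumption: tiles are simple command names and slots are read in order.

VALID_TILES = {"forward", "backward", "left", "right"}


class TileBar:
    def __init__(self, capacity=10):
        self.capacity = capacity
        self.slots = []  # tiles in the order they will be read

    def load(self, tiles):
        """Place tiles on the bar, refusing more than it can hold."""
        tiles = list(tiles)
        if len(tiles) > self.capacity:
            raise ValueError(f"The bar only holds {self.capacity} tiles")
        for tile in tiles:
            if tile not in VALID_TILES:
                raise ValueError(f"Unknown tile: {tile}")
        self.slots = tiles

    def replace(self, slot, tile):
        """Debug by swapping in a new tile (slots are numbered from 1)."""
        if tile not in VALID_TILES:
            raise ValueError(f"Unknown tile: {tile}")
        self.slots[slot - 1] = tile

    def swap(self, first, second):
        """Debug by reordering: exchange the tiles in two slots."""
        i, j = first - 1, second - 1
        self.slots[i], self.slots[j] = self.slots[j], self.slots[i]

    def run(self):
        """Yield (slot number, tile) pairs, like a light moving along the bar."""
        for number, tile in enumerate(self.slots, start=1):
            yield number, tile


bar = TileBar()
bar.load(["forward", "forward", "left", "forward", "forward"])
bar.replace(3, "right")  # the route turned the wrong way, so change one tile
for number, tile in bar.run():
    print(f"Slot {number} lit: {tile}")
```

Keeping the program as a visible, fixed-length list is the point of the design: a mistake can be located by slot number and fixed without re-entering everything else.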

Whilst the Blue-Bot and the Tactile Reader are both designed for primary school children just beginning to explore computing, I have also used them with SEND students up to Key Stage 4 as a way to get them started. The design can be seen as cartoonish rather than childish. Furthermore, the way it operates gives it a purpose beyond that of a toy, again promoting collaboration, problem-solving and language skills as students discuss approaches and resolve, or debug, the errors that occur.

The on-screen apps, available for iPad and Android, reinforce this too. Their different levels of programming allow for both support and added complexity: learners can start by using the app to operate the physical Blue-Bot, then move on to controlling a virtual Blue-Bot on the tablet or smartphone and completing some of the challenges.

The Advantages of Using Blue-Bot to Teach Programming to SEND Children

A lot of thought has gone into the Blue-Bot and its supporting materials. Without such devices it would be very difficult to help learners make the conceptual leap needed to program in the less concrete, more abstract environment on screen. The Blue-Bot offers immediacy and quick access to the subject. It also provides opportunities for more complex challenges: try getting two (or more) to perform a synchronised dance. And it offers a lot of fun, along with a well-deserved sense of achievement.

‘Beep, beep.’

With thanks to John Galloway for writing this post. John is a specialist in the use of technology to improve educational inclusion, particularly for children and young people with special educational needs. He works for Tower Hamlets as an Advisory Teacher, and also as a freelance consultant, trainer and writer.